SIFT: A new tool for detection of test fraud

Test fraud is an extremely common occurrence. We’ve all seen articles about examinee cheating. However, there are very few defensible tools to help detect it. I once saw a webinar from an online testing provider that proudly touted their reports on test security… but it turned out that all they provided was a simple export of student answers, which you could read and then form subjective conjectures about. The goal of SIFT is to provide a tool that implements real statistical indices from the corpus of scientific research on statistical detection of test fraud, yet is user-friendly enough to be used by someone without a PhD in psychometrics or experience in data forensics. SIFT provides more collusion indices and other analyses than any other software on the planet, making it the industry standard from the day of its release. The science behind SIFT is also being implemented in our world-class online testing platform, FastTest. It is also worth noting that FastTest supports computerized adaptive testing, which is known to increase test security.

Interested? Download a free trial version of SIFT!

What is Test Fraud?

As long as tests have been around, people have been trying to cheat on them. This is only natural; anytime there is a system with some sort of stakes or incentive involved (and maybe even when there isn’t), people will try to game that system. Note that the root culprit is the system itself, not the test. Blaming the test is just shooting the messenger. However, in most cases, the system serves a useful purpose. In the realm of assessment, that means that K12 assessments provide useful information on curricula and teachers, certification tests identify qualified professionals, and so on. In such cases, we must minimize the amount of test fraud in order to preserve the integrity of the system.

When it comes to test fraud, the old cliché is true: an ounce of prevention is worth a pound of cure. You’ll undoubtedly see that phrase at conferences and in other resources. So, of course, I recommend that your organization implement reasonable preventive measures to deter test fraud. Nevertheless, there will always be some cases, and SIFT is intended to help find them. Some examinees might also be deterred simply by knowing that such analysis is being done.

How can SIFT help me with statistical detection of test fraud?

Like other psychometric software, SIFT does not interpret results for you. For example, item analysis software such as Iteman and Xcalibre does not specifically tell you which items to retire or revise, or how to revise them, but it provides the output a practitioner needs to make those decisions. Likewise, SIFT provides a wide range of output that can help you find different types of test fraud, such as copying, proctor help, suspect test centers, and brain dump usage. It can also help find other issues, like low examinee motivation. But YOU have to decide what matters to you regarding statistical detection of test fraud, and look for relevant evidence. More information is provided in the manual, but here is a glimpse.

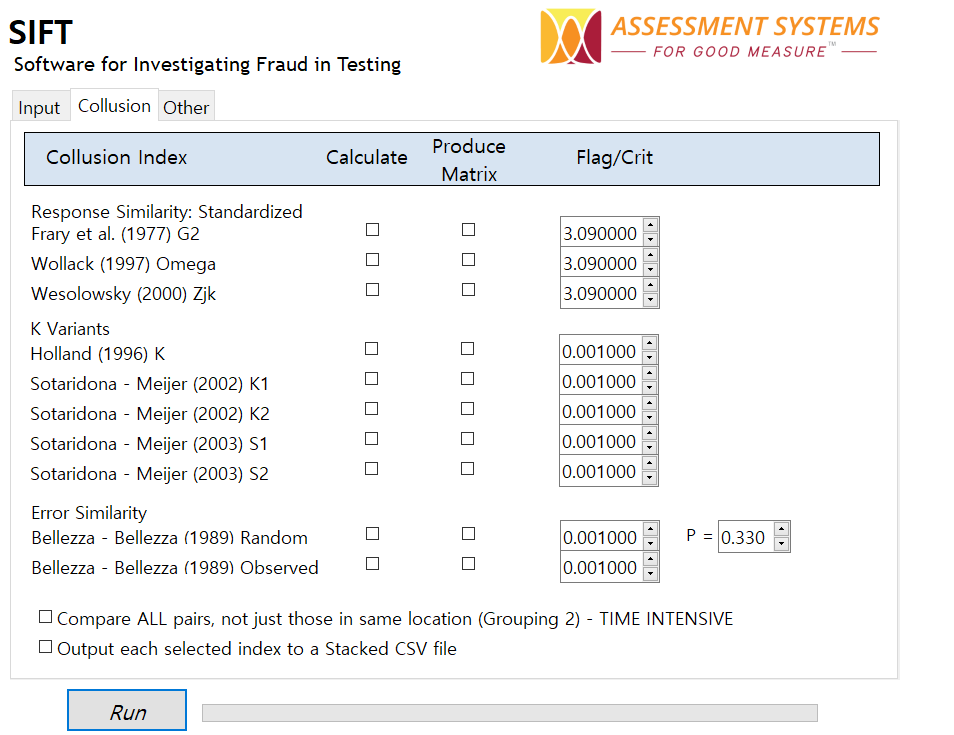

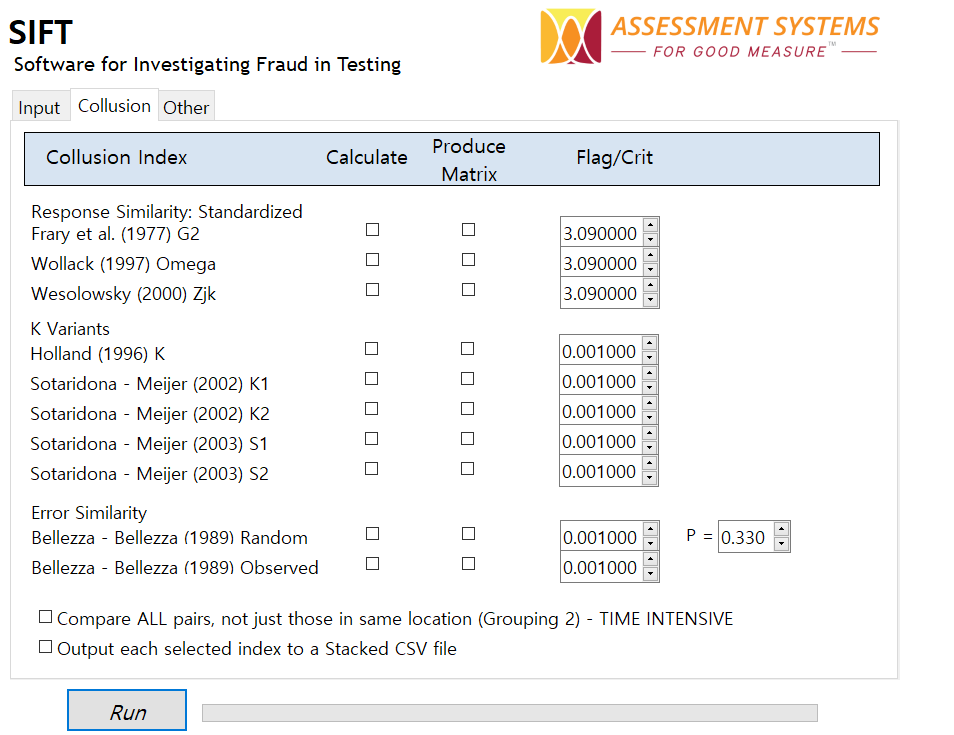

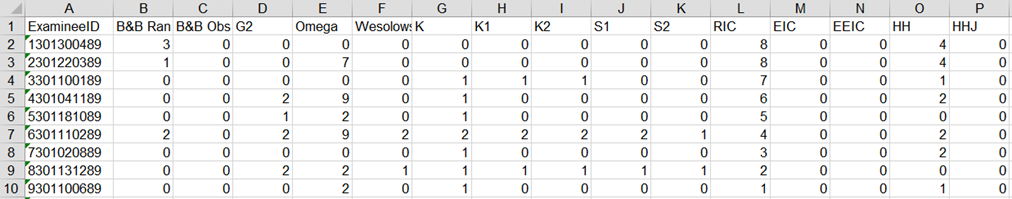

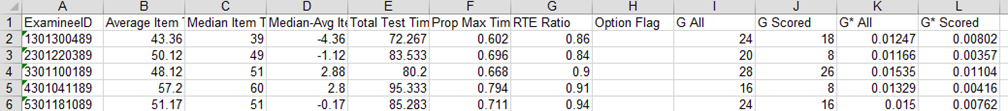

First, there are a number of indices you can evaluate, as you see above. SIFT calculates these collusion indices for each pair of students and summarizes the number of flags.
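To make the pairwise idea concrete, here is a minimal Python sketch of one simple descriptive index. For every pair of examinees, it counts the items on which both chose the same incorrect option, an idea sometimes called exact errors in common. The response data, the answer key, and the fixed flag threshold of 3 are all hypothetical, and this is not SIFT's code. In practice, the cutoff would come from a statistical criterion rather than being picked by hand.

```python
from collections import Counter
from itertools import combinations

# Hypothetical answer key and responses for a 6-item multiple-choice test.
KEY = ["A", "C", "B", "D", "A", "B"]
RESPONSES = {
    "ex01": ["A", "C", "D", "A", "C", "B"],
    "ex02": ["A", "C", "D", "A", "C", "B"],
    "ex03": ["A", "B", "B", "D", "A", "C"],
    "ex04": ["B", "C", "B", "D", "A", "B"],
}


def identical_errors(resp_a, resp_b, key):
    """Count items where both examinees chose the same incorrect option."""
    return sum(1 for a, b, correct in zip(resp_a, resp_b, key) if a == b and a != correct)


def flag_pairs(responses, key, threshold=3):
    """Compute the index for every pair; return all values and the flagged pairs.

    The fixed threshold is an illustrative assumption, not a SIFT setting.
    """
    values = {
        (id_a, id_b): identical_errors(responses[id_a], responses[id_b], key)
        for id_a, id_b in combinations(sorted(responses), 2)
    }
    flagged = [pair for pair, value in values.items() if value >= threshold]
    return values, flagged


values, flagged = flag_pairs(RESPONSES, KEY)
# Summarize how many flagged pairs each examinee appears in.
flag_counts = Counter(examinee for pair in flagged for examinee in pair)

for pair, value in values.items():
    print(pair, value)
print("Flagged pairs:", flagged)
print("Flags per examinee:", dict(flag_counts))
```

The final `Counter` mirrors the idea of summarizing flags per examinee. Someone who appears in many flagged pairs deserves a closer look than someone who appears in only one.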

A certification organization could use SIFT to look for evidence of brain dump makers and takers by evaluating the similarity between examinee response vectors and the answers from a brain dump site, especially if those answers were intentionally seeded by the organization! We might also want to find adjacent examinees, or examinees in the same location, who group together in the collusion index output. Unfortunately, these indices can differ substantially in their conclusions.
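A brain dump comparison can be sketched in the same way by treating the dump's answers as one more response vector. In this minimal Python sketch, the dump answers, the seeded item positions, and the examinee responses are all hypothetical. The sketch assumes that on the seeded items the organization planted answers it knows to be wrong.

```python
# Hypothetical answers harvested from a brain dump site. The organization
# seeded items 2 and 4 (zero-based) with answers it knows are wrong; on the
# other items the dump happens to list the correct answer.
DUMP_ANSWERS = ["A", "C", "D", "D", "C", "B"]
SEEDED_ITEMS = [2, 4]

# Hypothetical examinee responses.
RESPONSES = {
    "ex01": ["A", "C", "D", "A", "C", "B"],
    "ex02": ["A", "C", "D", "A", "C", "B"],
    "ex03": ["A", "B", "B", "D", "A", "C"],
    "ex04": ["B", "C", "B", "D", "A", "B"],
}


def dump_similarity(resp, dump, seeded):
    """Return (overall match rate with the dump, number of seeded items matched)."""
    overall = sum(r == d for r, d in zip(resp, dump)) / len(dump)
    seeded_hits = sum(resp[i] == dump[i] for i in seeded)
    return overall, seeded_hits


report = {
    examinee: dump_similarity(resp, DUMP_ANSWERS, SEEDED_ITEMS)
    for examinee, resp in sorted(RESPONSES.items())
}
for examinee, (overall, hits) in report.items():
    print(f"{examinee}: {overall:.2f} overall match, {hits}/{len(SEEDED_ITEMS)} seeded items matched")

# Examinees who reproduced every planted wrong answer.
suspects = [ex for ex, (_, hits) in report.items() if hits == len(SEEDED_ITEMS)]
print("Matched all seeded items:", suspects)
```

Matches on seeded items carry more weight than the overall match rate. On unseeded items the dump answers are often simply correct, so an honest high scorer will match them too.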

Additionally, you might want to evaluate time data. SIFT provides this as well.
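One simple way to look at time data is to flag examinees who score well while finishing far faster than their peers. The sketch below uses hypothetical total testing times in minutes. It flags examinees whose time z-score falls below -2 and whose score is above the group mean. These rules are illustrative choices and do not reflect SIFT's response time analysis.

```python
from statistics import mean, stdev

# Hypothetical data: (total testing time in minutes, raw score) per examinee.
EXAMINEES = {
    "ex01": (52, 30),
    "ex02": (48, 28),
    "ex03": (55, 33),
    "ex04": (60, 35),
    "ex05": (50, 29),
    "ex06": (47, 27),
    "ex07": (58, 34),
    "ex08": (12, 39),
}


def flag_fast_high_scorers(data, z_cut=-2.0):
    """Flag examinees whose time z-score is below z_cut and whose score beats the mean.

    Both rules are illustrative assumptions, not SIFT defaults.
    """
    times = [t for t, _ in data.values()]
    scores = [s for _, s in data.values()]
    t_mean, t_sd = mean(times), stdev(times)
    s_mean = mean(scores)
    flagged = []
    for examinee, (minutes, score) in sorted(data.items()):
        z = (minutes - t_mean) / t_sd
        if z < z_cut and score > s_mean:
            flagged.append((examinee, round(z, 2)))
    return flagged


flagged = flag_fast_high_scorers(EXAMINEES)
print(flagged)
```

An extreme value also inflates the standard deviation it is compared against. With only eight examinees, a z-score cannot fall much below -2.5, so a robust alternative, such as one based on the median, may suit small groups better.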

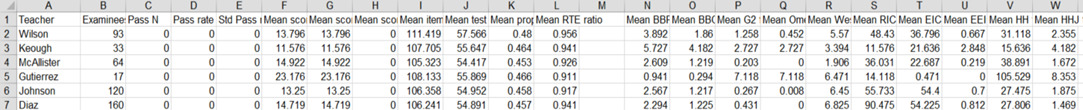

Finally, we can roll up many of these statistics to the group level. Below is an example that provides a portion of SIFT output regarding teachers. Note that Gutierrez has suspiciously high scores without spending much more time. Cheating? Possibly. On the other hand, that teacher has the smallest N, so perhaps they just had a group of accelerated students. Worthington also had high scores, but with notably shorter times; perhaps the teacher was helping?
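The roll-up itself is a simple group-by. This minimal Python sketch uses made-up records with hypothetical teacher labels, not the SIFT output described above. It reports the N, mean score, and mean time for each teacher, and it leaves the interpretation to you.

```python
from collections import defaultdict
from statistics import mean

# Made-up records: (teacher label, student score, minutes spent).
RECORDS = [
    ("Teacher A", 31, 50), ("Teacher A", 28, 55), ("Teacher A", 33, 48), ("Teacher A", 29, 52),
    ("Teacher B", 38, 30), ("Teacher B", 37, 28), ("Teacher B", 39, 31),
    ("Teacher C", 30, 47), ("Teacher C", 32, 51), ("Teacher C", 27, 53),
    ("Teacher C", 31, 49), ("Teacher C", 29, 50),
]


def roll_up(records):
    """Aggregate student-level records into per-teacher N, mean score, and mean time."""
    groups = defaultdict(list)
    for teacher, score, minutes in records:
        groups[teacher].append((score, minutes))
    summary = {}
    for teacher, rows in sorted(groups.items()):
        summary[teacher] = {
            "n": len(rows),
            "mean_score": mean([score for score, _ in rows]),
            "mean_minutes": mean([minutes for _, minutes in rows]),
        }
    return summary


summary = roll_up(RECORDS)
print(f"{'Teacher':<10} {'N':>3} {'Mean score':>11} {'Mean min':>9}")
for teacher, stats in summary.items():
    print(f"{teacher:<10} {stats['n']:>3} {stats['mean_score']:>11.2f} {stats['mean_minutes']:>9.2f}")
```

Reporting N alongside each mean matters for the reason given above. A small group can produce an extreme mean by chance.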

The Story of SIFT

I started SIFT in 2012. Years ago, ASC sold a software program called Scrutiny!, but we had to stop selling it because it did not work on recent versions of Windows. We still received inquiries about it, though, so I set out to develop a program that could perform the analysis from Scrutiny! (the Bellezza & Bellezza index) and much more. I quickly finished a few collusion indices. Then, unfortunately, I had to spend a few years dealing with the realities of business, wasting hundreds of hours in pointless meetings and other pitfalls. I finally set a goal to release SIFT in July 2016.

Version 1.0 of SIFT includes 10 collusion indices (5 probabilistic, 5 descriptive), response time analysis, group-level analysis, and much more to aid in the statistical detection of test fraud. This is obviously not an exhaustive list of the analyses in the literature, but it still far surpasses the other options available to practitioners, including writing all of your own code. Suggestions? I’d love to hear them.
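The two families of indices differ in what they report. A descriptive index reports a raw count, while a probabilistic index asks how unlikely that count would be by chance. The minimal Python sketch below is loosely in the spirit of error-similarity indices such as Bellezza & Bellezza's. It takes the items that both examinees answered incorrectly and computes the binomial probability of at least the observed number of identical wrong answers. The constant `p_same = 1/3` is an assumption, corresponding to each examinee picking uniformly among three distractors. Real indices estimate such probabilities from the data, and this is not SIFT's implementation.

```python
from math import comb


def binomial_upper_tail(k, n, p):
    """Return P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


def error_similarity(resp_a, resp_b, key, p_same=1 / 3):
    """Return (identical errors, common errors, upper-tail probability).

    p_same is an assumed chance that two examinees who both missed an item
    picked the same wrong option (here: uniform choice among 3 distractors).
    """
    common_errors = [
        (a, b) for a, b, correct in zip(resp_a, resp_b, key) if a != correct and b != correct
    ]
    n = len(common_errors)
    k = sum(a == b for a, b in common_errors)
    return k, n, binomial_upper_tail(k, n, p_same)


# Hypothetical 10-item test with four options per item.
KEY = "ABCDABCDAB"
EXAMINEE_A = "CDABABCDBC"
EXAMINEE_B = "CDABACCDBA"

identical, common, prob = error_similarity(EXAMINEE_A, EXAMINEE_B, KEY)
print(f"{identical} identical of {common} common errors, P = {prob:.4f}")
```

A raw count of identical wrong answers means little on its own. Converting it to a probability puts pairs with different numbers of shared errors on a common scale.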

Nathan Thompson, PhD
